I am currently a Postdoctoral Researcher at the Department of Computer Science, University of Oxford, working with Prof. Michael Wooldridge. Prior to this, I obtained my Ph.D. degree at the NExT++ Research Center, advised by Prof. Tat-Seng Chua in the School of Computing, National University of Singapore. I received both my M.S. and B.S. degrees from Wuhan University.

My research focuses on Large Vision-Language Foundation Models, particularly their capability, controllability, evaluability, and robust, reliable reasoning. I am also actively working on multi-agent systems and their integration with foundation models. More broadly, I am interested in Natural Language Processing and intelligent agent research.

I am always happy to discuss potential collaborations; please feel free to drop me an email.

Some of my representative work:

NExT-GPT:

The first unified any-to-any multimodal LLM, capable of understanding and generating across any modality or combination of modalities (e.g., text, image, video, audio). [PDF] [Github] [Huggingface] [Video] (ICML'24 Oral, selected as a Most Influential Paper by Paper Digest, WAIC Youth Outstanding Paper Award)

Any2Caption:

A SoTA framework for controllable video generation from any condition, and the first to leverage MLLMs to interpret diverse inputs into dense, structured captions. [PDF] [Github] [Huggingface] [Video] (Preprint, 2025)

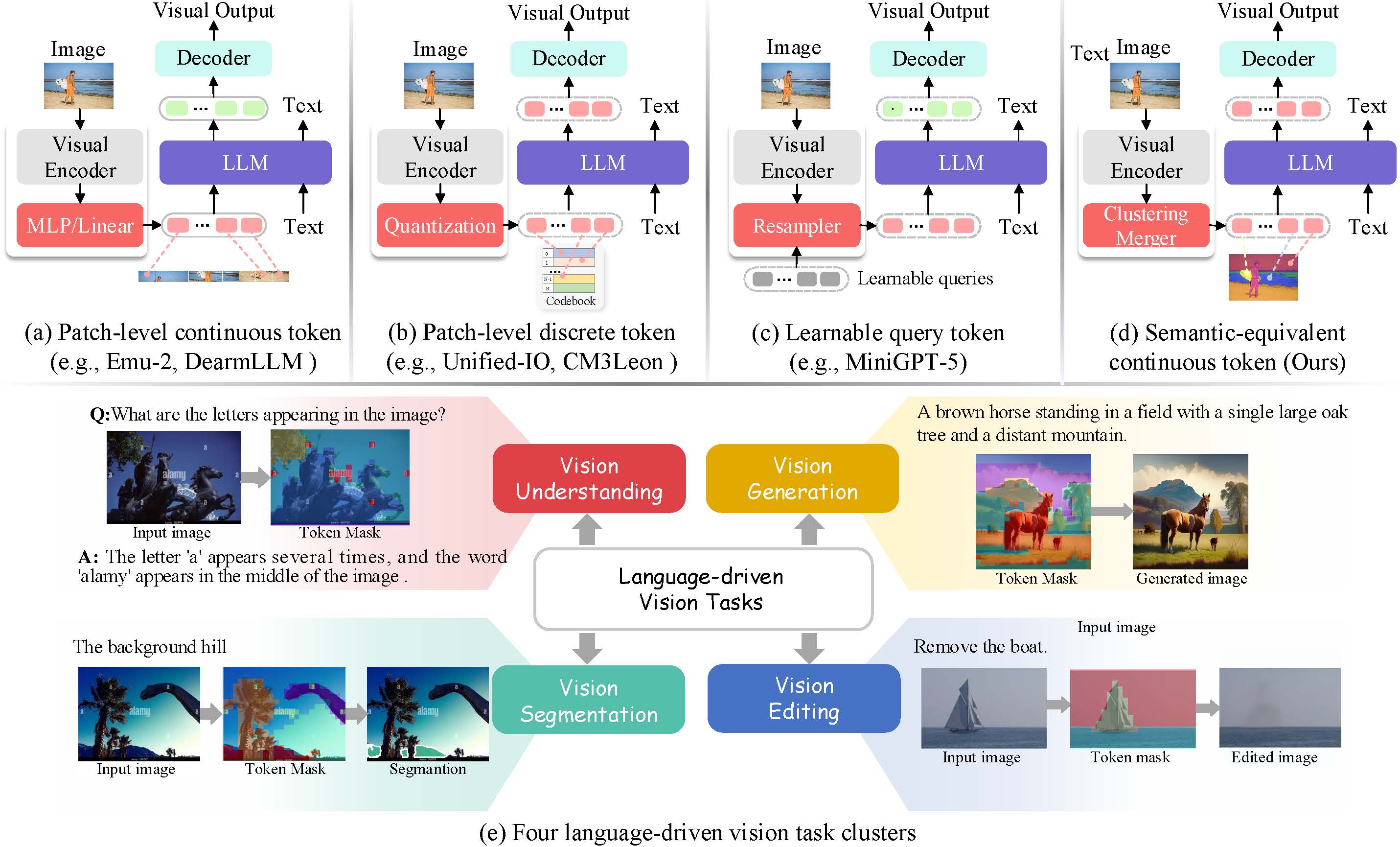

Setok:

The first to propose a general dynamic semantic-equivalent vision tokenizer, fundamentally addressing the performance bottlenecks of existing MLLMs. [PDF] [Github] (ICLR'25)

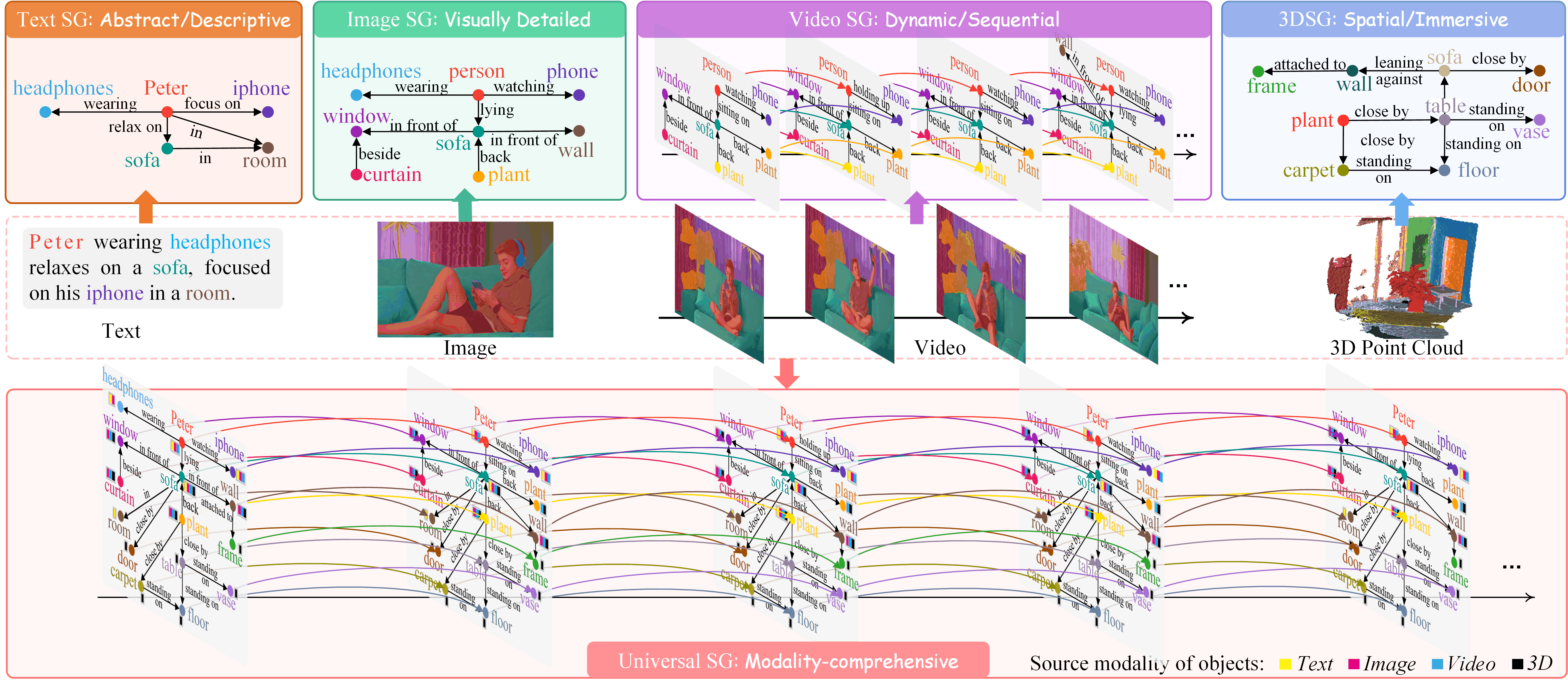

USG:

The first to propose a Universal Scene Graph representation framework that unifies structured semantic scene graphs across modalities including images, text, videos, and 3D. [PDF] [Github] (CVPR'25 Highlight)

Conference Reviewer: NeurIPS-23/24/25, ICLR-24/25, ICML-24/25, CVPR-24/25/26, ACM MM-23/24/25, IJCAI-23/24, AAAI-24/25/26, ACL-23/24, WSDM-23

Journal Reviewer: IJCV, TPAMI, TKDD, TOMM, IEEE/ACM TALLIP, TCSVT, EAAI, Neurocomputing

Kuaishou Kling Research Advisor: Weicai Ye, Xintao Wang

Kunlun Skywork AI, Singapore 2050 Research Advisor: Shuicheng Yan, Director
